
Introduction to Linear Algebra with Mathematica


In certain cases, such as when a system of equations is large, iterative methods of solving equations, such as the Gauss-Seidel method, are more advantageous. Elimination methods, such as Gaussian elimination, are prone to accumulating round-off errors for a large set of equations. Iterative methods let the user control round-off error: every iteration starts again from the original coefficients, and the iteration is stopped once the prescribed accuracy is reached. Also, if the physics of the problem is well known, the initial guesses needed in iterative methods can be chosen more judiciously, which leads to faster convergence.

Jacobi Iteration

We start with an example that clarifies the method.

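Example: Consider the system of equations
\[
4x - 2y + z = 9, \qquad 2x + 8y - 3z = 19, \qquad -x - 2y + 5z = -2 ,
\]
whose exact solution is (3, 2, 1). Solving the first equation for x, the second for y, and the third for z suggests the iteration
\[
x_{i+1} = \frac{9 + 2y_i - z_i}{4}, \qquad y_{i+1} = \frac{19 - 2x_i + 3z_i}{8}, \qquad z_{i+1} = \frac{x_i + 2y_i - 2}{5} .
\]
Starting with \( (x_0 , y_0 , z_0 ) = (2, 1, 0) , \) we obtain the following values.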

| i | x_i | y_i | z_i |
|---|---|---|---|
| 0 | 2 | 1 | 0 |
| 1 | 2.75 | 1.875 | 0.4 |
| 2 | 3.0875 | 1.8375 | 0.9 |
| 3 | 2.94375 | 1.94062 | 0.9525 |
| 4 | 2.98219 | 1.99625 | 0.965 |
| 5 | 3.00688 | 1.99133 | 0.994938 |
| 6 | 2.99693 | 1.99638 | 0.997906 |
| 7 | 2.99871 | 1.99998 | 0.997939 |
| 8 | 3.00051 | 1.99955 | 0.999736 |
| 9 | 2.99984 | 1.99977 | 0.999921 |
| 10 | 2.99991 | 2.00001 | 0.999878 |

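Clear[x, y, z]; x[0] = 2; y[0] = 1; z[0] = 0; (* starting values *)

Do[{x[i + 1] = N[(9 + 2*y[i] - z[i])/4], y[i + 1] = N[(19 - 2*x[i] + 3*z[i])/8], z[i + 1] = N[(x[i] + 2*y[i] - 2)/5]}, {i, 0, 10}] (* Jacobi: every update uses only values from step i *)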

Do[Print["i=", i, " x= ", x[i], " y= ", y[i], " z= ", z[i]], {i, 0, 10}]

This process is called Jacobi iteration and can be used to solve certain types of linear systems. It is named after the German mathematician Carl Gustav Jacob Jacobi (1804–1851), who made fundamental contributions to elliptic functions, dynamics, differential equations, and number theory. His first name is sometimes given as Karl, and he was the first Jewish mathematician to be appointed professor at a German university.
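The three update formulas above are the componentwise form of a single matrix iteration. Let D be the diagonal part of A and let R = A - D hold the remaining entries. Jacobi iteration then reads \( {\bf x}^{(k+1)} = {\bf D}^{-1} \left( {\bf b} - {\bf R}\,{\bf x}^{(k)} \right) . \) Below is a minimal sketch of that matrix form. The function name jacobiSolve, the stopping tolerance tol (applied to the change between successive iterates in the maximum norm), and the iteration cap maxit are our own illustrative choices, not part of the original worksheet.

```mathematica
(* Jacobi iteration in matrix form: x(k+1) = D^(-1) (b - R x(k)), where A = D + R *)
jacobiSolve[m_?MatrixQ, rhs_?VectorQ, x0_?VectorQ, tol_: 10^-8, maxit_: 100] :=
 Module[{d = Diagonal[m], r, xOld, xNew = N[x0], k = 0},
  r = m - DiagonalMatrix[d];            (* off-diagonal part R *)
  xOld = xNew + 1;                      (* dummy value so that the loop starts *)
  While[Norm[xNew - xOld, Infinity] > tol && k < maxit,
   xOld = xNew;
   xNew = (rhs - r.xOld)/d;             (* every component uses old values only *)
   k++];
  {xNew, k}]                            (* approximate solution, iterations used *)

(* The example system, started from (2, 1, 0) *)
A1 = {{4, -2, 1}, {2, 8, -3}, {-1, -2, 5}};
b1 = {9, 19, -2};
jacobiSolve[A1, b1, {2, 1, 0}]
```

Calling jacobiSolve[A1, b1, {2, 1, 0}, 10^-8, 1] performs a single step and returns the first row of the table, (2.75, 1.875, 0.4).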

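Linear systems with as many as 100,000 or more variables often arise in the numerical solution of partial differential equations (Chapter 7). The coefficient matrices of these systems are sparse; that is, a large percentage of the entries of the coefficient matrix are zero. An iterative process provides an efficient method for solving these large systems. Each iteration only needs products with the nonzero entries of the matrix, so the matrix is never modified (no fill-in is created), and the work per iteration is proportional to the number of nonzero entries.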
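To illustrate the point about sparsity, the sketch below applies Jacobi sweeps to a hypothetical tridiagonal system with 100,000 unknowns. The matrix has 4 on the diagonal and -1 on the two neighboring diagonals, so it is strictly diagonally dominant, and every right-hand side entry equals 1. The matrix and all names in the code are our own choices for illustration. Because the off-diagonal part is stored as a SparseArray, each sweep costs one sparse matrix-vector product.

```mathematica
(* Hypothetical large sparse test system: A = offDiag + 4 I, tridiagonal, nBig = 100000 *)
nBig = 100000;
offDiag = SparseArray[{Band[{1, 2}] -> -1., Band[{2, 1}] -> -1.}, {nBig, nBig}];
diagA = ConstantArray[4., nBig];            (* diagonal entries of A *)
bBig = ConstantArray[1., nBig];             (* hypothetical right-hand side *)

(* Jacobi sweeps: each one is a single sparse matrix-vector product,
   so the work is proportional to the number of nonzeros, not to nBig^2 *)
xBig = ConstantArray[0., nBig];
Do[xBig = (bBig - offDiag.xBig)/diagA, {50}];

(* residual of the full system A x = b in the maximum norm *)
Norm[bBig - (offDiag.xBig + diagA*xBig), Infinity]
```

Every row of this Jacobi iteration matrix has absolute row sum at most 1/2. Each sweep therefore at least halves the error in the maximum norm, so fifty sweeps are plenty here.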

Sometimes the Jacobi method does not converge. Let us experiment with the following system of equations.

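Example: Consider the system of equations
\[
2x - y + 4z = 8, \qquad 2x + y - 5z = 3, \qquad 5x + 2y - z = 18 ,
\]
which also has the solution (3, 2, 1). The Jacobi iteration
\[
x_{i+1} = \frac{8 + y_i - 4z_i}{2}, \qquad y_{i+1} = 3 - 2x_i + 5z_i , \qquad z_{i+1} = 5x_i + 2y_i - 18 ,
\]
started at \( (x_0 , y_0 , z_0 ) = (2, 1, 0) , \) diverges: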

| i | x_i | y_i | z_i |
|---|---|---|---|
| 0 | 2 | 1 | 0 |
| 1 | 4.5 | -1 | -6 |
| 2 | 15.5 | -36 | 2.5 |
| 3 | -19 | -15.5 | -12.5 |
| 4 | 21.25 | -21.5 | -144. |
| 5 | 281.25 | -759.5 | 45.25 |
| 6 | -466.25 | -333.25 | -130.75 |
| 7 | 98.875 | 281.75 | -3015.75 |
| 8 | 6176.38 | -15273.5 | 1039.88 |
| 9 | -9712.5 | -7150.38 | 316.875 |
| 10 | -4204.94 | 21012.4 | 62881.3 |

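The last example shows that we need some criterion to determine whether the Jacobi iteration will converge. Hence, we make the following definition.

Definition: An \( n \times n \) matrix \( {\bf A} = \left[ a_{i,j} \right] \) is strictly diagonally dominant if
\[
\left\vert a_{i,i} \right\vert > \sum_{j=1, \, j \ne i}^n \left\vert a_{i,j} \right\vert \qquad \text{for } i = 1, 2, \ldots , n .
\]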

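Theorem (Jacobi iteration): Suppose that A is a strictly diagonally dominant matrix. Then A x = b has a unique solution x = p. Iteration using the Jacobi formula
\[
x_i^{(k+1)} = \frac{1}{a_{i,i}} \left( b_i - \sum_{j \ne i} a_{i,j}\, x_j^{(k)} \right), \qquad i = 1, 2, \ldots , n ,
\]
produces a sequence of vectors \( {\bf x}^{(k)} \) that converges to p for any choice of the starting vector \( {\bf x}^{(0)} . \) ■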
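Strict diagonal dominance is easy to test, and the sketch below does so. It also computes the spectral radius of the Jacobi iteration matrix \( {\bf T} = -{\bf D}^{-1}({\bf A} - {\bf D}) . \) Jacobi iteration converges for every starting vector exactly when this spectral radius is less than 1, whereas dominance is only a sufficient condition. The function names are hypothetical. A1 is the coefficient matrix of the first example. A2 is our reconstruction of the divergent example: its Jacobi formulas \( x = (8 + y - 4z)/2, \ y = 3 - 2x + 5z, \ z = 5x + 2y - 18 \) reproduce the iterates in that table.

```mathematica
(* True when |a_ii| > (sum over j != i of |a_ij|) holds in every row *)
strictlyDiagonallyDominantQ[m_?MatrixQ] :=
 And @@ Table[Abs[m[[i, i]]] > Total[Abs[m[[i]]]] - Abs[m[[i, i]]], {i, Length[m]}]

(* Spectral radius of the Jacobi iteration matrix T = -D^(-1) (A - D) *)
jacobiSpectralRadius[m_?MatrixQ] := Module[{d = DiagonalMatrix[Diagonal[m]]},
  Max[Abs[Eigenvalues[N[-Inverse[d].(m - d)]]]]]

A1 = {{4, -2, 1}, {2, 8, -3}, {-1, -2, 5}};   (* first example: converges *)
A2 = {{2, -1, 4}, {2, 1, -5}, {5, 2, -1}};    (* second example: diverges *)

{strictlyDiagonallyDominantQ[A1], jacobiSpectralRadius[A1]}
{strictlyDiagonallyDominantQ[A2], jacobiSpectralRadius[A2]}
```

For A2 the characteristic polynomial of T is \( \lambda^3 + \lambda - 20.5 , \) and all its roots have modulus larger than 2. This is consistent with the rapid growth seen in the table.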

Seidel Method

The Gauss-Seidel method is used to solve a system of simultaneous linear or nonlinear equations, A x = b, where \( {\bf A} = \left[ a_{i,j} \right] \) is a square \( n \times n \) matrix, x is an unknown n-vector, and b is a given n-vector. If b depends on x, we write it as \( {\bf b} = {\bf f} ({\bf x}) . \) We first consider the case when b is a given vector that does not depend on x.

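Example: Consider again the system of equations
\[
4x - 2y + z = 9, \qquad 2x + 8y - 3z = 19, \qquad -x - 2y + 5z = -2 .
\]
The Gauss-Seidel iteration uses each new value as soon as it has been computed:
\[
x_{k+1} = \frac{9 + 2y_k - z_k}{4}, \qquad y_{k+1} = \frac{19 - 2x_{k+1} + 3z_k}{8}, \qquad z_{k+1} = \frac{x_{k+1} + 2y_{k+1} - 2}{5} .
\]
Starting with \( (x_0 , y_0 , z_0 ) = (2, 1, 0) , \) we compute: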

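Clear[x, y, z]; x[0] = 2; y[0] = 1; z[0] = 0; (* starting values *)

Do[{{xp = N[(9 + 2 y[k] - z[k])/4], yp = N[(19 - 2 xp + 3 z[k])/8],

zp = N[(xp + 2 yp - 2)/5]}, {x[k + 1] = xp, y[k + 1] = yp, z[k + 1] = zp}}, {k, 0, 10}] (* yp already uses the new xp; zp uses xp and yp *)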

Do[Print["i=", i, " x= ", x[i], " y= ", y[i], " z= ", z[i]], {i, 0, 10}]

| k | x_k | y_k | z_k |
|---|---|---|---|
| 0 | 2 | 1 | 0 |
| 1 | 2.75 | 1.6875 | 0.825 |
| 2 | 2.8875 | 1.9625 | 0.9625 |
| 3 | 2.99063 | 1.98828 | 0.993438 |
| 4 | 2.99578 | 1.99859 | 0.998594 |
| 5 | 2.99965 | 1.99956 | 0.999754 |
| 6 | 2.99984 | 1.99995 | 0.999947 |
| 7 | 2.99999 | 1.99998 | 0.999991 |
| 8 | 2.99999 | 2. | 0.999998 |
| 9 | 3. | 2. | 1. |
| 10 | 3. | 2. | 1. |

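Write A = L + D + U, where D is the diagonal part of A and L and U are its strictly lower and strictly upper triangular parts. The linear equation A x = b can then be rewritten as
\[
\left( {\bf L} + {\bf D} \right) {\bf x} = {\bf b} - {\bf U}\,{\bf x} ,
\]
which leads to the Gauss-Seidel iteration \( \left( {\bf L} + {\bf D} \right) {\bf x}^{(k+1)} = {\bf b} - {\bf U}\,{\bf x}^{(k)} , \) or, componentwise,
\[
x_i^{(k+1)} = \frac{1}{a_{i,i}} \left( b_i - \sum_{j<i} a_{i,j}\, x_j^{(k+1)} - \sum_{j>i} a_{i,j}\, x_j^{(k)} \right), \qquad i = 1, 2, \ldots , n .
\]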
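The splitting form translates directly into code. Write A = L + D + U, with D diagonal and L, U strictly lower and strictly upper triangular. Each Gauss-Seidel step then solves the lower triangular system \( ({\bf L} + {\bf D})\,{\bf x}^{(k+1)} = {\bf b} - {\bf U}\,{\bf x}^{(k)} . \) In the minimal sketch below, the name gaussSeidelSolve and its stopping rule are our own choices, and LinearSolve replaces a hand-coded forward substitution for brevity.

```mathematica
(* Gauss-Seidel as a matrix splitting: (L + D) x(k+1) = b - U x(k) *)
gaussSeidelSolve[m_?MatrixQ, rhs_?VectorQ, x0_?VectorQ, tol_: 10^-8, maxit_: 100] :=
 Module[{ld = LowerTriangularize[m], u, xOld, xNew = N[x0], k = 0},
  u = m - ld;                              (* strictly upper triangular part U *)
  xOld = xNew + 1;                         (* dummy value so that the loop starts *)
  While[Norm[xNew - xOld, Infinity] > tol && k < maxit,
   xOld = xNew;
   (* solve the lower triangular system; LinearSolve is used for brevity *)
   xNew = LinearSolve[ld, rhs - u.xOld];
   k++];
  {xNew, k}]                               (* approximate solution, iterations used *)

A1 = {{4, -2, 1}, {2, 8, -3}, {-1, -2, 5}};
b1 = {9, 19, -2};
gaussSeidelSolve[A1, b1, {2, 1, 0}]
```

With maxit set to 1, gaussSeidelSolve[A1, b1, {2, 1, 0}, 10^-8, 1] returns (2.75, 1.6875, 0.825), the first row of the Gauss-Seidel table.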

The following input parameters set up the computation. Change their values to have the program compute an approximate solution of a different system of equations.

- Number of equations, n;

n=4

- The \( n \times n \) coefficient matrix A. If the coefficient matrix is strictly diagonally dominant, convergence is ensured; otherwise, the iteration may or may not converge. The matrix below is not strictly diagonally dominant, since in its first row 10 < 3 + 4 + 5.

A = {{10, 3, 4, 5}, {2, 24, 2, 4}, {2, 2, 34, 3}, {2, 2, 2, 12}}; A // MatrixForm

- The right-hand side vector b:

RHS = {22, 32, 41, 18}; RHS // MatrixForm

- The initial guess of the solution vector x:

x = {1, 23, 4, 50}; x // MatrixForm

- The maximum number of iterations, maxit:

maxit = 5

absea = Table[0., {n}]; (* storage for the relative approximate errors *)

For[k = 1, k <= maxit, k++,

Print["Iteration Number"];

Print[k];

Print["Previous iteration values of the solution vector"];

Xold = x;

Print[Xold];

For[i = 1, i <= n, i++, summ = 0;

For[j = 1, j <= n, j++,

If[i != j, summ = summ + A[[i, j]]*x[[j]]]];

x[[i]] = N[(RHS[[i]] - summ)/A[[i, i]]]];

Maxabsea = 0.0;

For[i = 1, i <= n, i++,

absea[[i]] = Abs[(x[[i]] - Xold[[i]])/x[[i]]]*100.0;

If[absea[[i]] > Maxabsea, Maxabsea = absea[[i]]]];

Print["New iterative values of the solution vector"];

Print[x];

Print["Absolute percentage relative approximate error"];

Print[absea];

Print["Maximum absolute percentage relative approximate error"];

Print[Maxabsea];

Print[" "]; Print["__________________________________________"]; Print[" "];]

B = LinearSolve[A, RHS]; (* exact solution of A x = RHS, used as the reference *)

abset = Table[0., {n}];

Maxabset = 0.0;

For[i = 1, i <= n, i++, abset[[i]] = Abs[(B[[i]] - x[[i]])/B[[i]]]*100.0;

If[Maxabset <= abset[[i]], Maxabset = Abs[abset[[i]]]]];

Print["Exact Answer"]

Print[B]

Print[" "]; Print["_______________________________________"]; Print[" "];

Print["Last Iterative Value"]

Print[x]

Print[" "]; Print["_______________________________________"]; Print[" "];

Print["Absolute percentage relative true error"]

Print[abset]

Print[" "]; Print["_______________________________________"]; Print[" "];

Print["Maximum absolute percentage relative true error"]

Print[Maxabset]

Print[" "]; Print["_______________________________________"]; Print[" "];

Print["Maximum absolute percentage relative approximate error"]

Print[Maxabsea]
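The program above always performs exactly maxit sweeps. A compact variant, sketched below, instead stops as soon as the maximum absolute percentage relative approximate error falls below a pre-specified tolerance. The names gsSweep, maxRelErr, epsS, and its, the tolerance value, and the cap of 100 sweeps are our own assumptions.

```mathematica
(* One Gauss-Seidel sweep: each component is overwritten as soon as it is computed *)
gsSweep[m_?MatrixQ, rhs_?VectorQ, v_?VectorQ] := Module[{xs = v},
  Do[xs[[i]] = (rhs[[i]] - (m[[i]].xs - m[[i, i]]*xs[[i]]))/m[[i, i]], {i, Length[rhs]}];
  xs]

(* Maximum absolute percentage relative approximate error *)
maxRelErr[new_, old_] := 100*Max[Abs[(new - old)/new]]

A = {{10, 3, 4, 5}, {2, 24, 2, 4}, {2, 2, 34, 3}, {2, 2, 2, 12}};
RHS = {22, 32, 41, 18};
epsS = 10^-6;                         (* hypothetical stopping tolerance, in percent *)

xNew = N[{1, 23, 4, 50}]; its = 0;
While[True,
 xOld = xNew;
 xNew = gsSweep[A, RHS, xOld];
 its++;
 If[maxRelErr[xNew, xOld] < epsS || its >= 100, Break[]]];
{xNew, its}
```

For this matrix, LinearSolve[A, RHS] returns the exact solution {1, 1, 1, 1}, so the final iterate can be checked directly.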

Wegstein Algorithm

In 1958, J.H. Wegstein proposed (Comm ACM, 1, 9, 1958) another method for solving

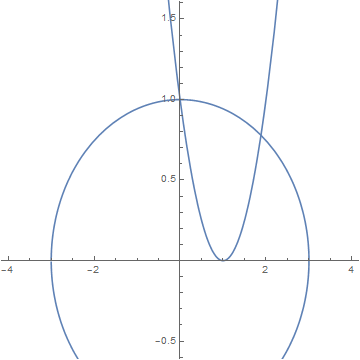
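The formula in the image above is not reproduced in this text. As a hedged sketch, the code below implements the scalar form of Wegstein's acceleration for x = g(x) as it is usually stated. Let s be the slope of the secant of g through the last two iterates and q = s/(s - 1); the update is then \( x_{k+1} = q\, x_k + (1-q)\, g(x_k) . \) Geometrically, \( x_{k+1} \) is the point where that secant meets the line y = x. The function name wegstein and its default tolerances are our own.

```mathematica
(* Scalar Wegstein iteration for x = g(x), in its commonly stated form:
   s = secant slope of g, q = s/(s - 1), x(k+1) = q x(k) + (1 - q) g(x(k)) *)
wegstein[gfun_, x0_, tol_: 10^-10, maxit_: 50] :=
 Module[{xPrev = N[x0], gPrev, x, gx, s, q, k = 1},
  gPrev = gfun[xPrev];
  x = gPrev;                                  (* first step: plain fixed-point iteration *)
  While[Abs[x - xPrev] > tol && k < maxit,
   gx = gfun[x];
   s = (gx - gPrev)/(x - xPrev);              (* slope of the secant through the last two points *)
   q = s/(s - 1);
   {xPrev, gPrev, x} = {x, gx, q*x + (1 - q)*gx};   (* Wegstein update *)
   k++];
  x]

wegstein[Cos, 0]   (* fixed point of x = cos x *)
```

Algebraically, this scalar update is the secant method applied to x - g(x). This is why it typically converges much faster than plain fixed-point iteration x = cos x.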

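We extend the methods studied previously to systems of nonlinear equations. Let us start with the planar case and consider the two equations
\[
x^2 - 2x - y + 1 = 0, \qquad x^2 + 9y^2 - 9 = 0 ,
\]
whose graphs are the parabola \( y = (x-1)^2 \) and the ellipse \( x^2 + 9y^2 = 9 . \) The solutions of the system are the intersection points of the two curves, which we plot: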

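Clear[x, y];

a = Plot[(x - 1)^2, {x, -4, 4}, AspectRatio -> 1] (* parabola y = (x - 1)^2 *)

b = Plot[Evaluate[y /. Solve[x^2 + 9*y^2 - 9 == 0, y]], {x, -4, 4}, AspectRatio -> 1] (* both halves of the ellipse *)

Show[a, b, PlotRange -> {-0.5, 1.5}]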
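The intersection points can also be located numerically. The sketch below uses the built-in FindRoot, which applies a Newton-type method from a user-supplied starting guess. The two starting guesses are our own choice, read off the plot. The results are the two fixed points (0, 1) and (1.88241, 0.778642) used in the iterations that follow.

```mathematica
Clear[x, y];
(* The planar system: parabola y = (x - 1)^2 and ellipse x^2 + 9 y^2 = 9 *)
eqs = {x^2 - 2 x - y + 1 == 0, x^2 + 9 y^2 - 9 == 0};
(* starting guesses read off the plot *)
root1 = FindRoot[eqs, {{x, 0.1}, {y, 0.9}}]
root2 = FindRoot[eqs, {{x, 1.9}, {y, 0.8}}]
```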

Fixed Point Iteration

We consider the problem \( {\bf x} = {\bf g} \left( {\bf x} \right) , \) where \( {\bf g}: \, \mathbb{R}^n \to \mathbb{R}^n \) is a function from n-dimensional space into itself. Fixed-point iteration is defined by selecting a vector \( {\bf x}_0 \in \mathbb{R}^n \) and defining the sequence \( {\bf x}_{k+1} = {\bf g} \left( {\bf x}_k \right) , \quad k=0,1,2,\ldots . \)

A point \( {\bf x} \in \mathbb{R}^n \) is a fixed point of \( {\bf g} \left( {\bf x} \right) \) if and only if \( {\bf x} = {\bf g} \left( {\bf x} \right) . \) A mapping \( {\bf g} \left( {\bf x} \right) \) is a contractive mapping (a contraction) if there is a positive number L less than 1 such that
\[
\| {\bf g} ({\bf x}) - {\bf g} ({\bf y}) \| \le L \, \| {\bf x} - {\bf y} \|
\]
for all x and y in its domain.

Theorem: Let \( {\bf g} \left( {\bf x} \right) \) be a continuous function on the closed box \( \Omega = \left\{ {\bf x} = (x_1 , x_2 , \ldots , x_n ) \, : \ a_i \le x_i \le b_i \right\} \) such that

- \( {\bf g} \left( \Omega \right) \subset \Omega ; \)

- \( {\bf g} \left( {\bf x} \right) \) is a contraction on Ω with constant L < 1.

Then

- g has a unique fixed point \( {\bf x} \in \Omega , \) and the sequence \( {\bf x}_{k+1} = {\bf g} \left( {\bf x}_k \right) , \quad k=0,1,2,\ldots ; \quad {\bf x}_0 \in \Omega , \) converges to x;

- \( \| {\bf x}_k - {\bf x} \| \le L^k \,\| {\bf x}_0 - {\bf x} \| . \) ■
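As a small illustration of the theorem, consider the hypothetical map g(x, y) = (cos(y)/2, sin(x)/2), our own example. Because \( |\cos a - \cos b| \le |a-b| \) and \( |\sin a - \sin b| \le |a-b| , \) it is a contraction in the maximum norm with L = 1/2. It also maps the whole plane into the square \( [-1/2, 1/2]^2 , \) which can serve as Ω. The sketch finds the fixed point with the built-in FixedPoint and then checks the error bound of the theorem along the first 20 iterates.

```mathematica
(* Hypothetical contraction on R^2 with L = 1/2 in the maximum norm *)
gMap[{u_, v_}] := {Cos[v]/2, Sin[u]/2};

xStar = FixedPoint[gMap, {0.5, 0.5}, 200];     (* iterate until the values stop changing *)
iters = NestList[gMap, {0.5, 0.5}, 20];        (* x0, x1, ..., x20 *)
errs = Norm[# - xStar, Infinity] & /@ iters;   (* ||x_k - x*|| in the maximum norm *)

(* check ||x_k - x*|| <= L^k ||x_0 - x*|| with L = 1/2 (small slack for rounding) *)
And @@ Table[errs[[k + 1]] <= (1/2)^k errs[[1]] + 10^-14, {k, 0, 20}]
```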


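Example. We apply fixed-point iteration to find the roots of the system of nonlinear equations
\[
x^2 - 2x - y + 1 = 0, \qquad x^2 + 9y^2 - 9 = 0 .
\]
We try two ways of writing this system in fixed-point form. The first scheme is
\[
x = \frac{x^2 - y + 1}{2}, \qquad y = \frac{x^2 + 9y^2 - 9 + 18y}{18} ,
\]
and the second is
\[
y = (x-1)^2 , \qquad x = \frac{(x+1)^2 + 9y^2 - 10}{2} .
\]
In both schemes the new values are computed from the old ones, starting at \( (x_0 , y_0 ) = (0, 0.5) . \)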

Clear[x, y]; x[0] = 0; y[0] = 0.5; (* starting point (0, 0.5) *)

Do[{x[k + 1] = N[(x[k]^2 - y[k] + 1)/2], y[k + 1] = N[(x[k]^2 + 9*y[k]^2 - 9 + 18*y[k])/18]}, {k, 0, 8}]

Do[Print["i=", i, " x= ", x[i], " y= ", y[i]], {i, 0, 9}]

Clear[x, y]; x[0] = 0; y[0] = 0.5;

Do[{y[k + 1] = N[(x[k] - 1)^2], x[k + 1] = N[((x[k] + 1)^2 + 9*y[k]^2 - 10)/2]}, {k, 0, 5}]

Do[Print["i=", i, " x= ", x[i], " y= ", y[i]], {i, 0, 6}]

| k | x_k (first scheme) | y_k (first scheme) | x_k (second scheme) | y_k (second scheme) |
|---|---|---|---|---|
| 0 | 0 | 0.5 | 0 | 0.5 |
| 1 | 0.25 | 0.125 | -3.375 | 1 |
| 2 | 0.46875 | -0.363715 | 2.32031 | 19.1406 |
| 3 | 0.791721 | -0.785364 | 1649.15 | 1.74323 |
| 4 | 1.20609 | -0.942142 | \( 1.3615 \times 10^{6} \) | \( 2.71639 \times 10^{6} \) |
| 5 | 1.6984 | -0.917512 | \( 3.41314 \times 10^{13} \) | \( 1.85369 \times 10^{12} \) |
| 6 | 2.40104 | -0.836344 | \( 5.97938 \times 10^{26} \) | \( 1.16495 \times 10^{27} \) |
| 7 | 3.80067 | -0.666331 | | |
| 8 | 8.0557 | -0.141829 | | |
| 9 | 33.0181 | 2.9734 | | |

If we use the starting value (1.5, 0.5) instead, we obtain

| k | x_k (first scheme) | y_k (first scheme) | x_k (second scheme) | y_k (second scheme) |
|---|---|---|---|---|
| 0 | 1.5 | 0.5 | 1.5 | 0.5 |
| 1 | 1.375 | 0.25 | -0.75 | 0.25 |
| 2 | 1.32031 | -0.113715 | -4.6875 | 3.0625 |
| 3 | 1.42847 | -0.510404 | 44.0039 | 32.3477 |
| 4 | 1.77547 | -0.766785 | 5716.34 | 1849.34 |
| 5 | 2.45953 | -0.797679 | \( 3.17342056 \times 10^{7} \) | \( 3.26652 \times 10^{7} \) |
| 6 | 3.92349 | -0.643461 | \( 5.3050884 \times 10^{15} \) | \( 1.00706 \times 10^{15} \) |
| 7 | 8.5186 | -0.0812318 | \( 1.86357 \times 10^{31} \) | \( 2.8144 \times 10^{31} \) |
| 8 | 36.8239 | 3.45355 | | |
| 9 | 676.774 | 84.2505 | | |

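We want to determine why our iteration formulas were not suitable for finding the solutions near either fixed point, (0, 1) or (1.88241, 0.778642). To answer this question, we need to evaluate the Jacobian matrix of the iteration functions x = f(x, y), y = g(x, y):
\[
{\bf J}(x,y) = \begin{bmatrix} \partial f/\partial x & \partial f/\partial y \\ \partial g/\partial x & \partial g/\partial y \end{bmatrix} .
\]
For the first scheme, \( f(x,y) = (x^2 - y + 1)/2 \) and \( g(x,y) = (x^2 + 9y^2 - 9 + 18y)/18 , \) so
\[
{\bf J}(x,y) = \begin{bmatrix} x & -1/2 \\ x/9 & y + 1 \end{bmatrix} .
\]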

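Theorem: Assume that the functions f(x,y) and g(x,y) and their first partial derivatives are continuous in a region that contains the fixed point (p,q). If the starting point is chosen sufficiently close to the fixed point and if
\[
\left| \frac{\partial f}{\partial x}(p,q) \right| + \left| \frac{\partial f}{\partial y}(p,q) \right| < 1 \quad \text{and} \quad \left| \frac{\partial g}{\partial x}(p,q) \right| + \left| \frac{\partial g}{\partial y}(p,q) \right| < 1 ,
\]
then the iteration \( x_{k+1} = f(x_k , y_k ), \ y_{k+1} = g(x_k , y_k ) \) converges to the fixed point (p,q). ■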
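The condition of the theorem can be checked symbolically. In the sketch below, f1 and g1 are our names for the iteration functions f and g of the first scheme, x = (x^2 - y + 1)/2 and y = (x^2 + 9y^2 - 9 + 18y)/18. The helper rowSums is likewise hypothetical. It evaluates the two absolute row sums of the Jacobian at each fixed point.

```mathematica
(* Iteration functions of the first scheme: x = f1(x, y), y = g1(x, y) *)
f1[x_, y_] := (x^2 - y + 1)/2;
g1[x_, y_] := (x^2 + 9 y^2 - 9 + 18 y)/18;

(* Absolute row sums {|f_x| + |f_y|, |g_x| + |g_y|} of the Jacobian at (p, q) *)
rowSums[{p_, q_}] := Module[{s, t},
  Total[Abs[D[{f1[s, t], g1[s, t]}, {{s, t}}] /. {s -> p, t -> q}], {2}]]

rowSums[{0, 1}]                  (* at the fixed point (0, 1) *)
rowSums[{1.88241, 0.778642}]     (* at the fixed point (1.88241, 0.778642) *)
```

At (0, 1) the second row sum equals 2. At (1.88241, 0.778642) both row sums exceed 1 (about 2.38 and 1.99). The sufficient condition of the theorem therefore fails at both fixed points.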

Seidel Method

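An improvement of fixed-point iteration, analogous to the Gauss-Seidel method for linear systems, can be made. Suppose we try to solve the system of two nonlinear equations x = f(x, y), y = g(x, y). Seidel iteration uses the new value \( x_{k+1} \) as soon as it is available:
\[
x_{k+1} = f(x_k , y_k ), \qquad y_{k+1} = g(x_{k+1} , y_k ) .
\]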
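To compare the two update orders, the sketch below rewrites the system x^2 - 2x - y + 1 = 0, x^2 + 9y^2 - 9 = 0 near the root (1.88241, 0.778642) as \( x = 1 + \sqrt{y} , \ y = \sqrt{9 - x^2}/3 . \) This rearrangement is our own choice, valid there because x > 1 and y > 0. At the root, \( \partial x/\partial y \approx 0.57 \) and \( \partial y/\partial x \approx -0.27 , \) so the row-sum condition of the previous theorem holds. The function names fS, gS, jacobiStep, and seidelStep are hypothetical.

```mathematica
(* Hypothetical rearrangement, valid near (1.88241, 0.778642) where x > 1, y > 0 *)
fS[x_, y_] := 1 + Sqrt[y];          (* from y = (x - 1)^2 *)
gS[x_, y_] := Sqrt[9 - x^2]/3;      (* upper half of x^2 + 9 y^2 = 9 *)

(* Fixed-point (Jacobi-type) step: both updates use old values *)
jacobiStep[{x_, y_}] := {fS[x, y], gS[x, y]};
(* Seidel step: the new x is used immediately when updating y *)
seidelStep[{x_, y_}] := With[{xn = fS[x, y]}, {xn, gS[xn, y]}];

NestList[jacobiStep, {1.5, 0.5}, 10] // TableForm
NestList[seidelStep, {1.5, 0.5}, 10] // TableForm
```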

Wegstein Algorithm

Let \( {\bf g} \, : \, \mathbb{R}^n \to \mathbb{R}^n \) be a continuous function. Suppose we are given a system of equations \( {\bf x} = {\bf g} ({\bf x}) . \)

In the planar case, we have two equations


Example. We apply the Wegstein algorithm to find the roots of the system of nonlinear equations

Newton's Methods

Newton's methods have difficulties with the initial guess, which, in general, has to be selected close to the solution. To avoid this problem for scalar equations, we combine the bisection method and Newton's method. First, we apply bisection to obtain a small interval that contains the root, and then we finish the work using Newton's iteration.
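The two-stage strategy just described can be sketched as follows. The name bisectNewton, the switching width bisectTol, and the test equation \( x^3 - 2x - 5 = 0 \) are our own illustrative choices. The sketch assumes that f changes sign on the starting interval and that f' does not vanish near the root.

```mathematica
(* Hybrid root finder for a scalar equation f(x) = 0; assumes f[a0] f[b0] < 0 *)
bisectNewton[f_, {a0_, b0_}, bisectTol_: 0.1, tol_: 10^-12, maxit_: 50] :=
 Module[{a = N[a0], b = N[b0], m, x, xNew, df = Derivative[1][f], k = 0},
  (* Stage 1: bisection until the bracketing interval is shorter than bisectTol *)
  While[b - a > bisectTol,
   m = (a + b)/2;
   If[f[a]*f[m] <= 0, b = m, a = m]];
  (* Stage 2: Newton's iteration started at the midpoint of the small interval *)
  x = (a + b)/2;
  xNew = x - f[x]/df[x];
  While[Abs[xNew - x] > tol && k < maxit,
   x = xNew;
   xNew = x - f[x]/df[x];
   k++];
  xNew]

bisectNewton[#^3 - 2 # - 5 &, {2, 3}]
```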

For systems, a global strategy is provided by descent methods, of which Newton's iteration is a special case. Newton's method is applied once the iterates are close to a root.

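Let us consider the system of nonlinear algebraic equations \( {\bf f} \left( {\bf x} \right) =0 , \) where \( {\bf f} \,:\, \mathbb{R}^n \to \mathbb{R}^n , \) and define the scalar multivariable function
\[
\phi ({\bf x}) = \sum_{i=1}^n f_i^2 ({\bf x}) = {\bf f}^T ({\bf x}) \, {\bf f} ({\bf x}) .
\]
The function φ has the following properties.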

- \( \phi ({\bf x}) \ge 0 \) for all \( {\bf x} \in \mathbb{R}^n . \)

- If x is a solution of \( {\bf f} \left( {\bf x} \right) =0 , \) then φ attains its smallest possible value, 0, at x; in particular, φ has a local minimum at x.

- At an arbitrary point x0 where \( \nabla \phi ({\bf x}_0 ) \ne {\bf 0} , \) the vector \( - \nabla \phi ({\bf x}_0 ) \) is the direction of the most rapid decrease of φ.

- At any such point, φ has infinitely many descent directions.

- A direction u is called a descent direction for φ at x if \( {\bf u}^T \nabla \phi ({\bf x}) < 0 . \)

- Special descent directions, where JF(x) denotes the Jacobian matrix of f at x:

(a) Steepest descent method: \( {\bf u}_k = -JF^T ({\bf x}_k )\, {\bf f} ({\bf x}_k ) , \)

(b) Newton’s method: \( {\bf u}_k = -JF^{-1} ({\bf x}_k )\, {\bf f} ({\bf x}_k ) . \) ■
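To see the two special directions in action, the sketch below evaluates them for the planar system x^2 - 2x - y + 1 = 0, x^2 + 9y^2 - 9 = 0 at the arbitrarily chosen point (1.5, 0.5). It then checks the descent condition \( {\bf u}^T \nabla \phi < 0 . \) The helper names fv, jf, and gradPhi are hypothetical. The gradient formula \( \nabla \phi = 2\, JF^T {\bf f} \) follows from \( \phi = {\bf f}^T {\bf f} . \)

```mathematica
(* The planar system f(x) = 0 and its Jacobian JF *)
fv[{x_, y_}] := {x^2 - 2 x - y + 1, x^2 + 9 y^2 - 9};
jf[{x_, y_}] := {{2 x - 2, -1}, {2 x, 18 y}};
gradPhi[v_] := 2 Transpose[jf[v]].fv[v];            (* gradient of phi = f.f *)

v0 = {1.5, 0.5};                                    (* hypothetical point *)
uSteep = -Transpose[jf[v0]].fv[v0];                 (* steepest descent direction *)
uNewton = -LinearSolve[jf[v0], fv[v0]];             (* Newton direction *)
{uSteep.gradPhi[v0], uNewton.gradPhi[v0]}           (* both should be negative *)
```

For the Newton direction, \( {\bf u}^T \nabla \phi = -2\,\phi , \) so it is a descent direction wherever JF is nonsingular and f is not zero.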

For scalar problems \( f (x) =0 , \) the steepest descent direction is given by \( - \phi' (x) = -2\,f(x) \,f' (x) , \) while Newton's method yields \( -f(x) / f' (x) . \) These have the same sign, because the two differ by the positive factor \( 2 \left[ f'(x) \right]^2 . \)

The question that arises for all descent methods is how far one should go in a given descent direction. Several techniques and conditions have been developed to answer this question and, under suitable assumptions, to guarantee convergence to a minimum of φ. For a detailed discussion, consult the book

Numerical Methods for Unconstrained Optimization and Nonlinear Equations, by J. E. Dennis, Jr. and Robert B. Schnabel, 1996, ISBN 978-0-89871-364-0. See also http://www.math.vt.edu/people/adjerids/homepage/teaching/F09/Math5465/chapter2.pdf
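One common answer to the step-length question is a backtracking line search. It starts with the full Newton step and halves it until φ has decreased sufficiently (the Armijo condition). The following is a minimal sketch of such a damped Newton method for the planar system x^2 - 2x - y + 1 = 0, x^2 + 9y^2 - 9 = 0. It is not the specific algorithm of the book cited above. The names dampedNewton, fv, jf, phi, the constant c = 10^-4, and the starting point (3, 3) are our own assumptions.

```mathematica
(* The planar system f(x) = 0, its Jacobian, and phi = f^T f *)
fv[{x_, y_}] := {x^2 - 2 x - y + 1, x^2 + 9 y^2 - 9};
jf[{x_, y_}] := {{2 x - 2, -1}, {2 x, 18 y}};
phi[v_] := fv[v].fv[v];

(* Newton direction combined with a backtracking line search on phi *)
dampedNewton[v0_, tol_: 10^-12, maxit_: 50] :=
 Module[{v = N[v0], u, t, k = 0},
  While[Sqrt[phi[v]] > tol && k < maxit,
   u = -LinearSolve[jf[v], fv[v]];            (* Newton direction *)
   t = 1.;
   (* Along the Newton direction grad(phi).u = -2 phi, so the Armijo condition
      phi(v + t u) <= phi(v) + c t grad(phi).u reads
      phi(v + t u) <= (1 - 2 c t) phi(v); here c = 10^-4 *)
   While[phi[v + t u] > (1 - 2*10^-4 t) phi[v] && t > 10^-8, t = t/2];
   v = v + t u;
   k++];
  v]

dampedNewton[{3, 3}]
```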
