Preface

This is a tutorial made solely for the purpose of education and it was designed for students taking Applied Math 0330. It is primarily for students who have very little experience or have never used Mathematica before and would like to learn more of the basics for this computer algebra system. As a friendly reminder, don't forget to clear variables in use and/or the kernel.

Finally, the commands in this tutorial are all written in bold black font, while Mathematica output is in normal font. This means that you can copy and paste all commands into Mathematica, change the parameters, and run them. You, as the user, are free to use the scripts to learn the Mathematica program, and you may distribute and refer to this tutorial as long as it is credited appropriately.


Existence and Uniqueness

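Theorem: Suppose that f(x,y) is a continuous function defined in some rectangular region \( R= \left\{ (x,y)\,:\, |x-x_0 | \le a, \quad |y- y_0 | \le b \,\right\} \) containing the point \( (x_0 , y_0 ) . \) Then the initial value problem \( y' = f(x,y), \ y(x_0 )= y_0 \) has at least one solution \( y = \phi (x) \) on some interval \( |x- x_0 | \le h \) with h > 0.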

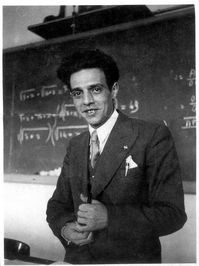

This theorem was proved in 1886 by the Italian mathematician Giuseppe Peano (1858--1932). Peano was a founder of symbolic logic whose interests centred on the foundations of mathematics and on the development of a formal logical language. In 1890 Peano founded the journal Rivista di Matematica, which published its first issue in January 1891. In 1891, Peano started the Formulario Project. It was to be an "Encyclopedia of Mathematics", containing all known formulae and theorems of mathematical science in a standard notation invented by Peano.

In addition to his teaching at the University of Turin, Peano lectured at the Military Academy in Turin in 1886. The following year he discovered, and published, a method for solving systems of linear differential equations using successive approximations. However, Émile Picard had independently discovered this method and had credited the German mathematician Hermann Schwarz (1843--1921) with discovering it first. In 1888 Peano published the book Geometrical Calculus, which begins with a chapter on mathematical logic.

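Theorem: Let f(y) be a continuous function on the closed interval [a,b] that has exactly one zero \( y^{\ast} \in (a,b) , \) namely, \( f(y^{\ast} ) =0 \) and \( f(y) \ne 0 \) for all other points \( y \in (a,b) . \) If the integral \( \int_{y}^{y^{\ast}} \frac{{\text d}u}{f(u)} \) diverges for every \( y \ne y^{\ast} \) in (a,b), then the initial value problem \( y' = f(y), \ y(x_0 )= y^{\ast} \) has the unique solution \( y(x) \equiv y^{\ast} . \) If the integral converges for some such y, then the problem has more than one solution.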
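The integral test can be tried on two simple slope functions. The sketch below assumes that the integral in question is that of 1/f taken up to the zero of f; the functions f1 and f2 are hypothetical examples, both vanishing at u = 0, and the option Direction -> "FromAbove" assumes a recent Mathematica version (older versions use Direction -> -1).

```mathematica
ClearAll[f1, f2, u, eps];
f1[u_] := Sqrt[u];  (* hypothetical slope function with its zero at u = 0 *)
f2[u_] := u;        (* hypothetical slope function with its zero at u = 0 *)

(* Integral of 1/f1 from the zero up to 1: a finite value signals multiple solutions *)
i1 = Integrate[1/f1[u], {u, 0, 1}]

(* Integral of 1/f2 from eps up to 1, then eps -> 0+: an infinite limit signals uniqueness *)
i2 = Limit[Integrate[1/f2[u], {u, eps, 1}, Assumptions -> 0 < eps < 1],
   eps -> 0, Direction -> "FromAbove"]
```

The first integral equals 2, which matches the fact that y' = Sqrt[y], y(0) = 0 is solved both by y = 0 and by y = x^2/4 for x ≥ 0. The second limit is infinite, and y' = y, y(0) = 0 has only the zero solution.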

Theorem: Suppose that f(x,y) is uniformly Lipschitz continuous in y (meaning the Lipschitz constant L in the inequality \( |f(x,y_1 ) - f(x, y_2 )| \le L\,|y_1 - y_2 | \) can be taken independent of x) and continuous in x. Then, for some positive value δ, there exists a unique solution \( y = \phi (x) \) to the initial value problem \( y' = f(x,y), \ y(x_0 )= y_0 \) on the interval \( [x_0 - \delta , x_0 + \delta ] . \)

Theorem: Let f(x,y) be continuous for all (x,y) in the open rectangle \( R= \left\{ (x,y)\,:\, |x-x_0 | < a, \quad |y- y_0 | < b \,\right\} \) and Lipschitz continuous in y, with constant L independent of x. Then there exists a unique solution to the initial value problem \( y' = f(x,y), \ y(x_0 )= y_0 \) on some interval \( |x- x_0 | < h \) with \( 0 < h \le a . \)

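Theorem: Suppose that f(x,y) and \( \frac{\partial f}{\partial y} \) are continuous functions defined in some rectangular region \( R= \left\{ (x,y)\,:\, |x-x_0 | \le a, \quad |y- y_0 | \le b \,\right\} \) containing the point \( (x_0 , y_0 ) . \) Then the initial value problem \( y' = f(x,y), \ y(x_0 )= y_0 \) has a unique solution on some interval \( |x- x_0 | \le h \) with h > 0.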
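The method of successive approximations, which is commonly used to prove uniqueness theorems of this kind, can be carried out symbolically. The following is a minimal sketch, not a general-purpose routine: the slope function f, the initial data x0 and y0, and the helper picardStep are all hypothetical names chosen for illustration.

```mathematica
ClearAll[f, x, y, t, picardStep];
f[x_, y_] := x + y;      (* hypothetical slope function, Lipschitz in y with L = 1 *)
x0 = 0; y0 = 1;          (* hypothetical initial condition y(x0) = y0 *)

(* One Picard step: phi(x) -> y0 + integral of f(t, phi(t)) from x0 to x *)
picardStep[phi_] := y0 + Integrate[f[t, phi /. x -> t], {t, x0, x}];

iterates = NestList[picardStep, y0, 4];   (* the approximations phi_0, ..., phi_4 *)

(* Exact solution for comparison *)
exact = y[x] /. First[DSolve[{y'[x] == f[x, y[x]], y[x0] == y0}, y[x], x]];

(* Expand the error of the last iterate around x0 *)
Series[exact - Last[iterates], {x, 0, 5}]
```

Each iterate is a polynomial in x, and the series expansion shows that the error of the fourth iterate starts at the fifth power of x, so the iterates reproduce more and more terms of the Taylor expansion of the exact solution.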

Corollary: Solutions depend continuously on the initial conditions whenever the slope function f satisfies a global Lipschitz condition.
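Continuous dependence can be observed on an equation that is solvable in closed form. The sketch below uses a hypothetical linear slope function f(x,y) = -2 y + Cos[x], which is globally Lipschitz in y with L = 2; the symbols a and b stand for two initial values, and sol is a helper name introduced here only for illustration.

```mathematica
ClearAll[x, y, a, b, sol];

(* Solution of the hypothetical problem y' = -2 y + Cos[x], y(0) = a *)
sol[a_] := y[x] /. First[DSolve[{y'[x] == -2 y[x] + Cos[x], y[0] == a}, y[x], x]];

(* Difference between the solutions that start at a and at b *)
gap = Simplify[sol[a] - sol[b]]
```

The difference is (a - b) Exp[-2 x], so it tends to zero for every fixed x as b approaches a. It also respects the standard estimate \( |y_1 (x) - y_2 (x)| \le |a-b|\, e^{L |x- x_0 |} \) with L = 2 and \( x_0 = 0 . \)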

Corollary: If the solution y(x) of the initial value problem \( y' = f(x,y), \ y(x_0 )= y_0 \) has an a priori bound M, i.e., \( |y(x)| \le M \) whenever y(x) exists, then the solution exists for all \( x \in \mathbb{R} . \)
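The role of the a priori bound can be seen by comparing two problems. Both equations below are hypothetical examples chosen for illustration: the first has no bound and its solution escapes to infinity at a finite x, while the solution of the second is trapped between the equilibria y = 0 and y = 1. DSolve may print a warning about inverse functions for the second problem, and the named Direction option assumes a recent Mathematica version.

```mathematica
ClearAll[x, y];

(* No a priori bound: the solution of y' = y^2, y(0) = 1 blows up at x = 1 *)
blowup = y[x] /. First[DSolve[{y'[x] == y[x]^2, y[0] == 1}, y[x], x]]

(* A priori bound 0 < y < 1: the logistic equation y' = y (1 - y), y(0) = 1/2 *)
bounded = y[x] /. First[DSolve[{y'[x] == y[x] (1 - y[x]), y[0] == 1/2}, y[x], x]]

Limit[blowup, x -> 1, Direction -> "FromBelow"]                  (* grows without bound *)
{Limit[bounded, x -> -Infinity], Limit[bounded, x -> Infinity]}  (* stays between 0 and 1 *)
```

The first solution exists only for x < 1, whereas the second one is defined on the whole real line, in agreement with the corollary.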

Example. Consider the initial value problem

q2 = Plot[0, {x, -3.5, 3.5}, PlotStyle -> {Thick, Black}] (* singular solution y = 0 *)

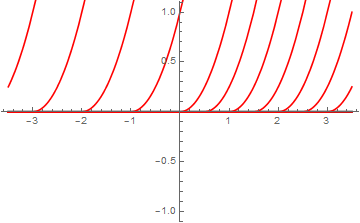

graph4[CC_] := Plot[Evaluate[q[x, CC]], {x, -3.5, 3.5}, AxesLabel -> {"x", "y"},
  PlotRange -> {{-3.5, 3.5}, {-0.5, 6}}, AspectRatio -> 1,
  PlotStyle -> RGBColor[1, 0, 0]] (* one member of the family, drawn in red *)

initlist = {0, 0.5, 1, 1.5, 2, 2.5, 3, 3.5, 4, -1, -2, -3, -4}; (* values of the constant *)

graphlist = Table[graph4[c], {c, initlist}]; (* loop over the constants, one plot each *)

solgraph = Show[q2, graphlist, PlotRange -> {{-3.5, 3.5}, {-0.5, 6}}] (* all curves in one frame *)

In this sequence of commands, we first enter the family of solutions to the differential equation. Since C is a protected symbol in Mathematica, we use CC instead. Then we define a function that plots one solution and loop over the values of the constant C. Finally, we display all graphs together.

We can also check that the given initial value problem has multiple solutions by evaluating integral

Example. Consider the initial value problem for the Riccati equation
